Kodak invented the digital camera and still went bankrupt. The consulting giants are staring at the same fork in the road.

1975 isn't a year you associate with selfies. But that's when Steve Sasson, a Kodak engineer, built the world's first digital camera. It was clunky, shot in black and white, and stored images on a cassette tape. It was also, by any measure, the future.

Kodak's executives buried it. A digital camera would eat into film sales, their $10 billion cash cow. Why cannibalise yourself when the money is still rolling in? Two decades later, Sony, Canon, and Nikon owned the digital photography market. Kodak filed for bankruptcy in 2012. The technology that could have saved the company was killed by the people running it.

That exact playbook is now repeating in enterprise software. Except the stakes are roughly 50 times larger.

The biggest forced migration in decades

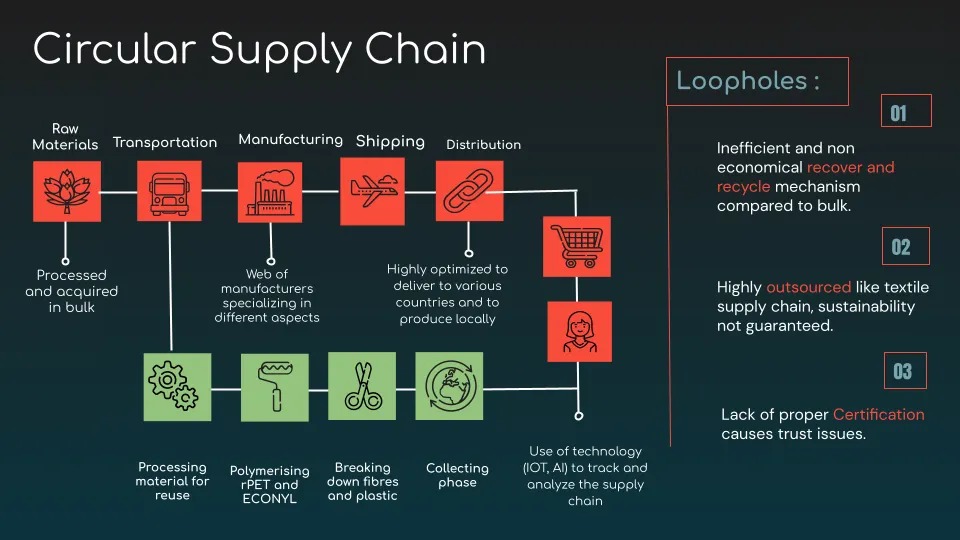

SAP's legacy ERP system, called ECC, is the operational backbone of over 35,000 companies globally, including 60% of the Fortune 500. It runs their supply chains, finance, procurement, and HR. EVERYTHING.

SAP has set a hard end-of-support deadline: December 2027, with extended maintenance stretching to 2030. Once support ends, there are no security patches, no compliance updates, no fixes. Every one of those companies needs to migrate to S/4HANA, SAP's next-generation platform.

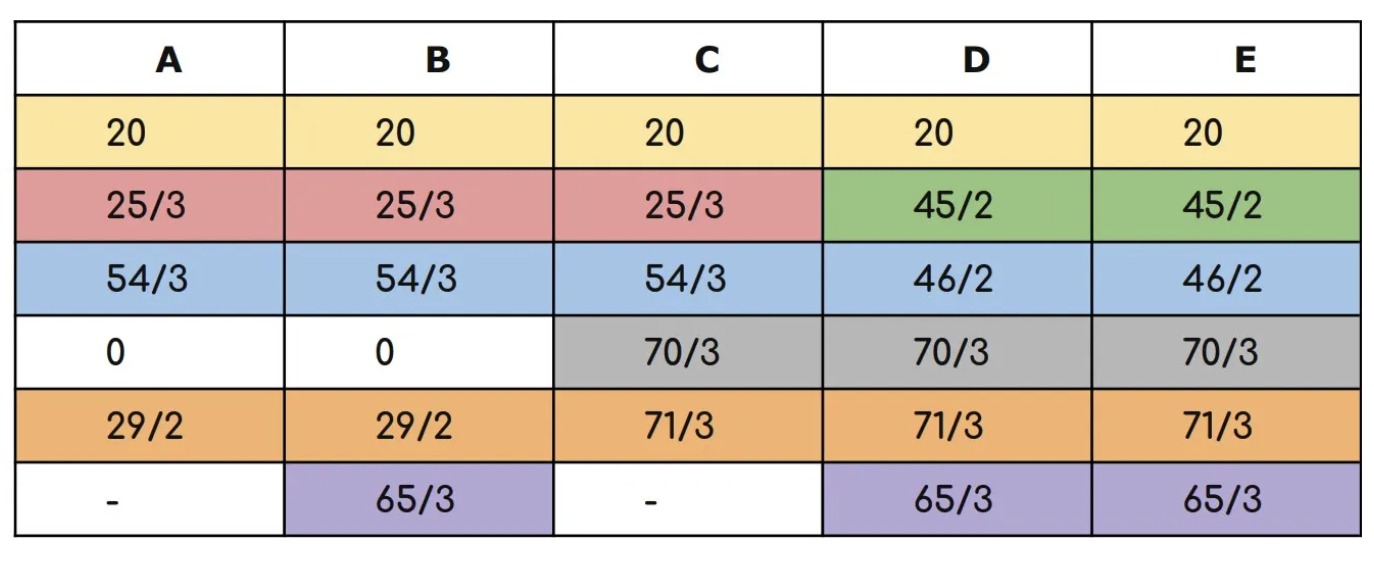

This is not a software upgrade you knock out over a weekend. A typical migration for a large enterprise costs $100–250M, spans 2.5 to 5 years, and involves 10,000+ test cases and 30TB+ of data. Only 8–10% of these projects finish on time. Sixty-five percent report major quality issues. And Gartner projects that nearly half of all ECC customers still won't have migrated by 2027.

The consulting rates? Expected to spike 30–50% as the deadline closes in and the talent pool dries up. Demand for S/4HANA specialists could hit three times the available supply by next year.

The incumbents have a Kodak problem

Companies migrating their ERPs don't actually know if their data is correct. They don't know if their business processes are correctly implemented in the new system. And the way they find out today is almost entirely manual.

System integrators such as Accenture, Deloitte, and EY deploy armies of consultants to write test cases by hand, validate business rules through front-end clicks, and run spreadsheet-based data checks. This work alone consumes 30%+ of project timelines and budgets. And even then, coverage is incomplete. Edge cases slip through.

The incumbents know AI can automate huge chunks of this. They have the data. They have the client relationships. They have the domain expertise. And yet — automating assurance work would cannibalise their own billable hours, the same billable hours that fund their quarterly earnings. A partner at a Big Four firm doesn't get promoted for shrinking headcount on a $200M engagement. They get promoted for growing it.

This is the Kodak trap, but in a suit. Protect today's revenue. Delay tomorrow's disruption. Hope someone else blinks first.

The window is open — but not for long

The biggest opportunities in enterprise AI right now are not in building another chatbot or another copilot for developers. They are in the unsexy, high-stakes operational plumbing that trillion-dollar companies depend on (migration assurance, data validation, process integrity, retrofit analysis), where manual work still dominates because the incumbents have every incentive to keep it that way.

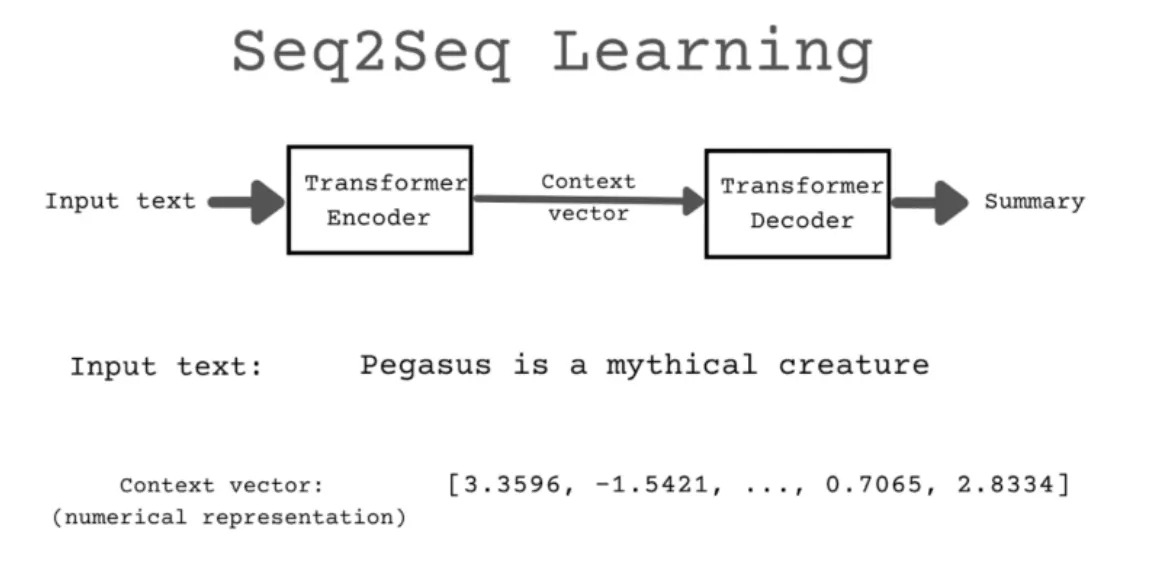

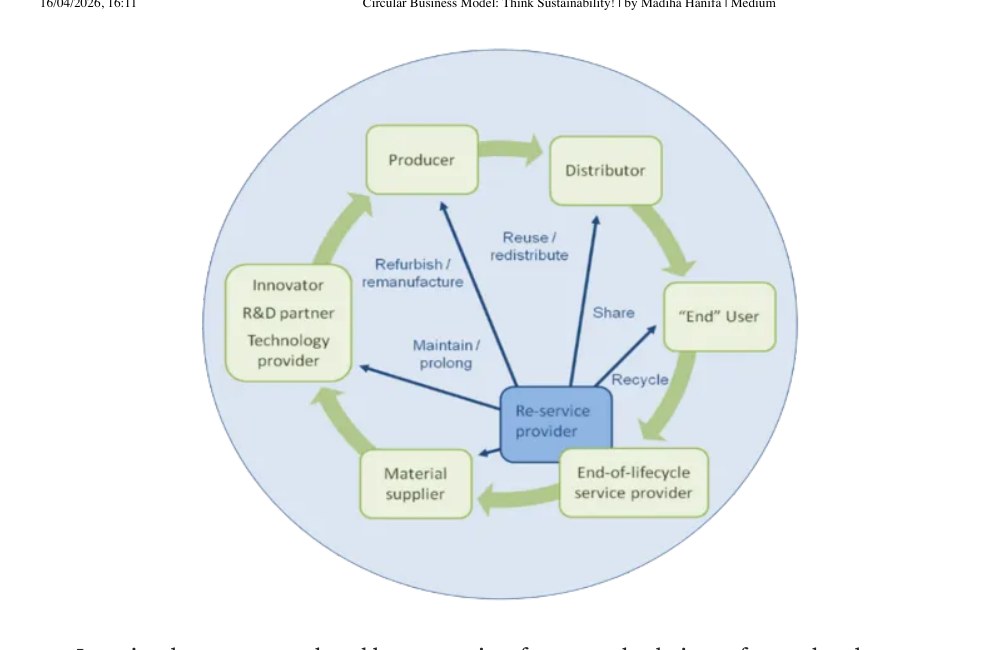

The emerging model: AI agents that ingest a company's business documents, flowcharts, code, and data definitions. They map how data flows across workflows, generate validation scenarios that used to take consultants weeks, run those checks continuously against live systems, and flag inconsistencies before they cause damage.
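To make "run those checks and flag inconsistencies" a little more concrete, here is a minimal sketch of the simplest kind of check such a system might run: reconciling records from the legacy system against their migrated counterparts. Everything in it is an assumption for illustration. The `Finding` type, the `reconcile` function, and the invoice data are hypothetical, and a real assurance tool would read from actual ECC and S/4HANA extracts rather than in-memory dictionaries.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Finding:
    """One inconsistency found between the legacy and migrated data (hypothetical type)."""
    key: str
    kind: str    # "missing_in_target", "unexpected_in_target", or "field_mismatch"
    detail: str


def reconcile(legacy: dict[str, dict], target: dict[str, dict], fields: list[str]) -> list[Finding]:
    """Compare two keyed record sets and report every discrepancy."""
    findings = []
    # Records that exist in the legacy system but never arrived in the new one.
    for key in sorted(legacy.keys() - target.keys()):
        findings.append(Finding(key, "missing_in_target", "record not migrated"))
    # Records in the new system with no legacy source.
    for key in sorted(target.keys() - legacy.keys()):
        findings.append(Finding(key, "unexpected_in_target", "record has no legacy source"))
    # Records present on both sides: compare the fields we care about.
    for key in sorted(legacy.keys() & target.keys()):
        for field in fields:
            old, new = legacy[key].get(field), target[key].get(field)
            if old != new:
                findings.append(Finding(key, "field_mismatch", f"{field}: {old!r} -> {new!r}"))
    return findings


# Made-up sample data: one invoice is lost in migration, one has a transposed amount.
legacy_invoices = {
    "INV-001": {"amount": Decimal("1200.00"), "currency": "EUR"},
    "INV-002": {"amount": Decimal("89.50"), "currency": "USD"},
    "INV-003": {"amount": Decimal("430.10"), "currency": "EUR"},
}
migrated_invoices = {
    "INV-001": {"amount": Decimal("1200.00"), "currency": "EUR"},
    "INV-002": {"amount": Decimal("89.05"), "currency": "USD"},
}

findings = reconcile(legacy_invoices, migrated_invoices, ["amount", "currency"])
for finding in findings:
    print(finding.key, finding.kind, finding.detail)
```

On this sample the sketch reports the missing invoice and the mismatched amount. The interesting part of the emerging model is everything around a check like this: deciding which fields and rules matter from the business documents, and running thousands of such comparisons continuously instead of once in a spreadsheet.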

The market math backs it up. Business assurance for SAP S/4 migrations alone is an $18 billion opportunity. Expand to all ERP systems and it's $35 billion. Zoom out to AI-led IT services broadly and you're looking at $500 billion to $1 trillion by 2030.

The one thing that could go wrong

Accenture alone spends over $1 billion annually on AI and has publicly committed to training its entire workforce on generative AI tools. Deloitte, EY, and TCS are all building internal AI accelerators for SAP migrations. If even one of the Big Four decides to aggressively productise its validation workflows and cannibalise its own services revenue, the startup window narrows fast.

The bulls would argue that incumbents have had a decade to make this shift and haven't. That the incentive structure is too broken, the org charts too bloated, the partner economics too entrenched. But underestimating a trillion-dollar services industry that has survived every technology wave since mainframes would be its own kind of corporate myopia.

The clock is ticking either way

The 2027 deadline is not moving. Nearly half of SAP's customer base is still dragging its feet. The migration wave is guaranteed. The only open question is who captures the value — the lumbering giants who see the disruption coming but can't bring themselves to act, or the new entrants fast enough to build trust before the window shuts.

Kodak had 37 years between inventing the digital camera and filing for bankruptcy. The enterprise AI window is measured in months, not decades.

The company that invents the future doesn't always get to live in it. But the one that ships it fast enough just might.
